Research topics

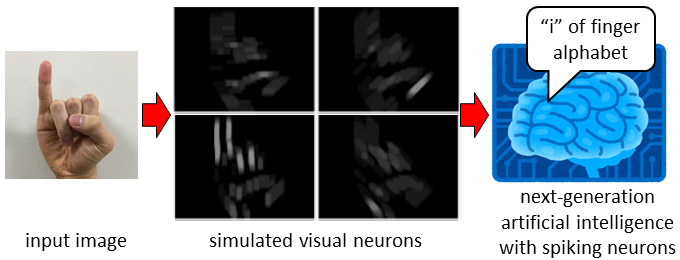

Image recognition using artificial intelligence composed of spiking neurons

Image recognition remains a difficult task even though it has been studied intensively using artificial intelligence. The difficulty increases drastically when motion must be recognized in addition to shape. Humans, by contrast, recognize both motion and shape from visual information in an instant, and communicate through motion and shape, as in gestures, finger alphabets, and sign language.

The purpose of this project is to develop a next-generation visual recognition system by combining artificial intelligence composed of spiking neurons with an algorithm inspired by cortical visual neuronal networks that respond to particular shapes and motions. This approach is expected to lead to a compact translation system for gestures, finger alphabets, and sign language.
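As an illustration of what a spiking neuron is, the following is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a standard textbook model. It is not the model used in this project. The function name `simulate_lif` and all parameter values are assumptions chosen for illustration only.

```python
def simulate_lif(input_current, dt=1.0, tau=10.0,
                 v_rest=0.0, v_threshold=1.0, v_reset=0.0):
    """Simulate a leaky integrate-and-fire neuron with forward Euler steps.

    input_current: sequence of input values, one per time step.
    Returns the list of step indices at which the neuron emitted a spike.
    All parameters are illustrative (hypothetical), not taken from the project.
    """
    v = v_rest
    spike_steps = []
    for step, current in enumerate(input_current):
        # The membrane potential leaks toward rest and is driven by the input.
        v += dt * (-(v - v_rest) + current) / tau
        if v >= v_threshold:
            # Crossing the threshold emits a spike and resets the potential.
            spike_steps.append(step)
            v = v_reset
    return spike_steps


weak = simulate_lif([2.0] * 100)
strong = simulate_lif([4.0] * 100)
print(len(weak), len(strong))
```

In this sketch a stronger input produces more spikes in the same time window, so information is carried by spike timing and rate rather than by a continuous activation value.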

Real-time embedded AI system development, and its application to robot control

Although image recognition using conventional methods such as deep learning has achieved some success, it is computationally demanding and poorly suited to small devices with limited computing power, such as smartphones and robots. By contrast, the brains of living creatures perform recognition in real time without difficulty, even though they are composed of neurons that are much slower than the semiconductor devices that make up computers.

The purpose of this project is to develop a compact, low-power embedded AI system by combining neuromorphic technology, circuit design technology, and deep learning, and to develop a robot that operates autonomously using that embedded AI system.

Vision-aided control of a micro air vehicle (MAV) inspired by insect vision

Small unmanned aerial vehicles (UAVs) are expected to fly and work autonomously in situations where human activity is limited, as shown in the figure. However, it is difficult to implement an automatic control system in a small UAV because its size and power budget are limited. Flying insects, by contrast, can recognize their own state, such as self-motion, travel distance, and danger of collision, from visual information processed by their tiny visual nervous system.

The goal of this project is to develop a system, inspired by the insect's visual nervous system, that recognizes the vehicle's own state, and to develop an autonomous small UAV that can explore unknown indoor environments where GPS is unavailable.

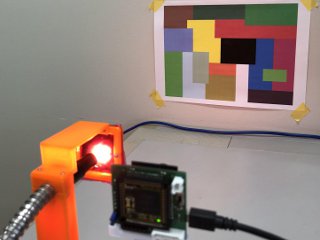

Image sensor system that is robust to illumination changes

Conventional robotic vision systems suffer from loss of visual information caused by large changes in light intensity, and from color shifts caused by changes in the color of the illumination, because visual information is strongly influenced by the characteristics of the illumination. Biological visual systems, by contrast, have excellent mechanisms that drastically reduce the influence of illumination and help us survive under a wide range of illumination conditions.

The purpose of this study is to develop an image sensor system that provides stable visual information even under severe illumination changes. To achieve this robustness, the project will exploit the mechanisms by which the biological visual system cancels changes in illumination, such as adaptation, wide-dynamic-range coding, and color constancy.
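To make the idea of color constancy concrete, the following is a minimal sketch of the classical gray-world heuristic, which rescales each color channel so that the scene average becomes neutral gray. It is a generic computer-vision technique shown for illustration only, not the biologically inspired mechanism this study aims to develop. The function name `gray_world` and the sample pixel values are assumptions.

```python
def gray_world(pixels):
    """Apply gray-world color correction to a list of (r, g, b) tuples.

    Each channel is scaled so that its mean equals the mean over all
    three channels. This is a classical heuristic used here only as an
    illustration (hypothetical helper, not the project's method).
    """
    if not pixels:
        return []
    n = len(pixels)
    # Mean of each color channel across the image.
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3.0
    # Per-channel gain; leave a channel untouched if its mean is zero.
    gains = [gray / m if m > 0 else 1.0 for m in means]
    return [tuple(p[c] * gains[c] for c in range(3)) for p in pixels]


# The same two-pixel scene viewed under a reddish illuminant
# (red channel boosted by 1.5, blue channel halved).
observed = [(0.3, 0.4, 0.3), (0.9, 0.4, 0.1)]
corrected = gray_world(observed)
print(corrected)
```

The heuristic works only when the true scene average is close to gray. Biological mechanisms such as adaptation operate locally and over time, which is part of what makes them more robust than this global correction.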

Copyright © 2016 Neuromorphic systems laboratory All Rights Reserved.